The Hidden Cost of Images in AI Agent Context Windows

675,000 dead images. 573 billion wasted tokens. 2.3 terabytes of redundant data — every single day.

The Problem Nobody Talks About

Every AI agent framework that supports images — OpenClaw, LangChain, CrewAI, AutoGen — stores them as raw base64 in session history. When an image enters a conversation, it stays there. Forever. Getting re-sent to the API on every single turn.

That screenshot your agent received 40 turns ago? Still in context. Still costing tokens. Still eating your context window. And every compression tool on the market explicitly skips images.

OpenClaw's built-in /compact command? Skips images. Context Gateway? Skips images. Agno CompressionManager? Skips images. OpenClaw's session pruning documentation says so outright: "Tool results containing image blocks are skipped (never trimmed/cleared)."

Everyone compresses text. Nobody touches the images.

The Math (Show Your Work)

Start with the OpenClaw ecosystem

OpenClaw crossed 250,000 GitHub stars in March 2026, surpassing React. With a conservative 5–10% active install rate for a daily-driver tool, that's 12,500–25,000 active instances.

Using moderate estimates:

- 12,500 active OpenClaw installations

- Average 3 agents per instance

- 37,500 total agent sessions

- Average 18 images per active session (from our production fleet of 10 agents)

Each image stored as base64 costs approximately 17,000 tokens. That's 60–100KB of raw encoded data per image.

But here's the part that hurts: images don't cost you once. They cost you every turn.

Every time the agent processes a message, the entire session history, including every image, gets sent to the API. A single conversation with 5 images costs about 85,000 extra tokens (5 × 17,000) on every API call.
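
To make the per-turn cost concrete, here is a minimal sketch of the arithmetic. It assumes the article's figure of roughly 17,000 tokens per image and, as a simplification, that all images are already in the history from the first call; the function names are illustrative only.

```python
TOKENS_PER_IMAGE = 17_000  # the article's approximate figure per base64 image


def image_tokens_per_call(num_images: int) -> int:
    """Image tokens re-sent on one API call when the full history is replayed."""
    return num_images * TOKENS_PER_IMAGE


def cumulative_image_tokens(num_images: int, turns: int) -> int:
    """Total image tokens sent over `turns` calls if no image is ever removed."""
    return image_tokens_per_call(num_images) * turns


print(image_tokens_per_call(5))        # 85000
print(cumulative_image_tokens(5, 40))  # 3400000
```

Under these assumptions, five screenshots that are never removed account for 3.4 million image tokens over a 40-turn conversation.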

Scale that up

| Metric | Calculation | Result |
|---|---|---|
| Images across OpenClaw | 12,500 × 3 agents × 18 images | ~675,000 |
| Turns per session per day | conservative for active agents | ~50 |
| Image re-sends per day | 675,000 × 50 | 33.75 million |
| Tokens per image | approximate, base64-encoded | ~17,000 |
| Total tokens transmitted daily | 33.75M × 17,000 | ~573 billion |
| Data transferred (base64 ~4 bytes/token) | 573B × 4 bytes | ~2.3 TB/day |
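
The table can be reproduced in a few lines. This is only a sketch of the estimate: every default below is one of the article's stated assumptions, not a measurement, and the function name is made up for illustration.

```python
def daily_image_waste(installations: int = 12_500,
                      agents_per_install: int = 3,
                      images_per_session: int = 18,
                      turns_per_day: int = 50,
                      tokens_per_image: int = 17_000,
                      bytes_per_token: int = 4) -> dict:
    """Recompute the ecosystem estimate from its input assumptions."""
    images = installations * agents_per_install * images_per_session
    resends = images * turns_per_day
    tokens = resends * tokens_per_image
    return {
        "images": images,
        "resends": resends,
        "tokens": tokens,
        "terabytes": tokens * bytes_per_token / 1e12,
    }


estimate = daily_image_waste()
print(estimate["images"])                 # 675000
print(estimate["resends"])                # 33750000
print(estimate["tokens"])                 # 573750000000
print(f'{estimate["terabytes"]:.1f} TB')  # 2.3 TB

# Sensitivity check: halve the number of active installations.
half = daily_image_waste(installations=6_250)
print(f'{half["terabytes"]:.2f} TB')      # 1.15 TB
```

Because the result is a straight product of the inputs, changing any one assumption scales the total proportionally.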

For context: 2.3 TB is equivalent to streaming about 750 hours of HD Netflix. Every day. Except nobody's watching — it's just screenshots from last Tuesday being re-tokenized by GPUs that have better things to do.

Where the Waste Goes

That 2.3 TB/day doesn't just vanish. Every redundant image re-send triggers a cascade:

- Network transfer — base64 data uploaded from the user's machine to the API endpoint

- API server parsing — the JSON payload gets deserialized, the base64 gets decoded

- Image processing — the vision encoder (CLIP/ViT) tiles the image into patches and converts to token embeddings

- Attention computation — every image token attends to every other token in the context window (O(n²) complexity)

- GPU memory — VRAM allocated to store and process image representations that will be immediately irrelevant

- Electricity — actual watts powering inference on pixels from a conversation that moved on 30 turns ago

- Carbon emissions — real CO₂ for literally nothing

And this happens on every turn, for every agent, for every image, all day long.
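
The quadratic attention point is easiest to see with numbers. The sketch below assumes plain full self-attention, where the number of query-key score pairs grows as n², and ignores any caching or sparse-attention optimizations a provider may use. The 20,000-token text history is a hypothetical figure; the image count and size are the article's.

```python
def attention_pairs(context_tokens: int) -> int:
    """Number of query-key score pairs in full self-attention over the context."""
    return context_tokens * context_tokens


text_tokens = 20_000        # hypothetical text-only conversation
image_tokens = 5 * 17_000   # five images at the article's ~17,000 tokens each

without_images = attention_pairs(text_tokens)
with_images = attention_pairs(text_tokens + image_tokens)

print(f"{with_images / without_images:.1f}x")  # 27.6x
```

In this toy case the context is about five times longer with the images, but the pairwise attention work is more than 27 times larger.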

The API provider (Anthropic, OpenAI, Google) bears the compute cost. The user bears the token cost. The planet bears the carbon cost. Nobody benefits.

Why Can't Agents Just… Forget Images?

The current architecture makes this surprisingly hard:

Session history is append-only. When an image enters the conversation, it becomes part of the immutable session log. The model needs the full conversation history to maintain coherence, so you can't just drop messages.

Compaction summarizes text, not images. When OpenClaw's /compact command runs, it summarizes the text conversation into a shorter form. But images are binary blobs — you can't "summarize" raw base64. So compaction skips them entirely.

Pruning is too conservative. OpenClaw's session pruning system trims old tool results to save space. But it explicitly exempts image blocks, likely because removing an image the model might still reference could cause hallucination or confusion.

The result: Images are the one content type that enters the context window and never leaves. They're the cockroaches of the token economy.

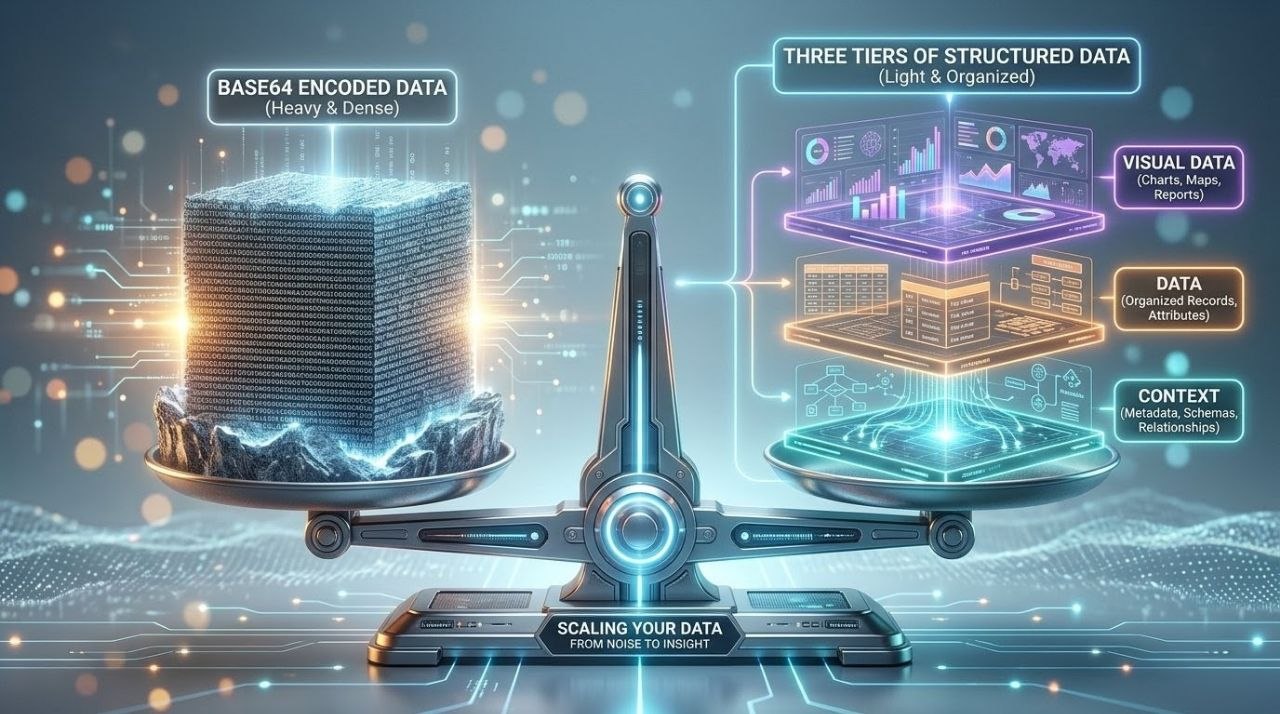

The Fix — Three-Tier Extraction

What if instead of carrying the raw image, you carried a complete text description of everything in it?

That's what Shrink does. It's the first multimodal context optimizer for AI agents. For each image in session history, Shrink:

- Reads the surrounding conversation to understand why the image was shared

- Sends the image to a vision model with a context-aware prompt

- Extracts three tiers of information:

  - CONTEXT: why the image matters in the conversation

  - DATA: every readable value (text, numbers, IDs, dates, amounts)

  - VISUAL: design details (colors, layout, spacing, typography)

- Replaces the base64 blob with the structured text description
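
The steps above can be sketched roughly as follows. This is not Shrink's actual implementation: it assumes the history is a list of messages whose content is either a string or a list of blocks with `"type": "text"` or `"type": "image"` (the shape used by the Anthropic Messages API), and `fake_describe` is a hypothetical stand-in for the real vision-model call.

```python
from typing import Callable


def text_of(message: dict) -> str:
    """Collect the plain text of one message (string or block-list content)."""
    content = message.get("content", "")
    if isinstance(content, str):
        return content
    return " ".join(b.get("text", "") for b in content if b.get("type") == "text")


def shrink_messages(messages: list[dict],
                    describe: Callable[[dict, str], str],
                    window: int = 2) -> list[dict]:
    """Return a copy of the history with image blocks swapped for text blocks."""
    shrunk = []
    for i, message in enumerate(messages):
        content = message.get("content")
        if content is None or isinstance(content, str):
            shrunk.append(message)  # no blocks, so nothing to replace
            continue
        # Gather nearby conversation so the description can be context-aware.
        nearby = " ".join(text_of(m) for m in messages[max(0, i - window): i + window + 1])
        new_content = []
        for block in content:
            if block.get("type") == "image":
                # Describe the image, then keep only the text description.
                new_content.append({"type": "text", "text": describe(block, nearby)})
            else:
                new_content.append(block)
        shrunk.append({**message, "content": new_content})
    return shrunk


def fake_describe(image_block: dict, context: str) -> str:
    """Placeholder for a real vision-model call (hypothetical output format)."""
    return ("[image replaced]\n"
            f"CONTEXT: shared while discussing: {context[:60]}\n"
            "DATA: (readable values would go here)\n"
            "VISUAL: (layout and color notes would go here)")


history = [
    {"role": "user", "content": [
        {"type": "text", "text": "Here is the failing build log."},
        {"type": "image", "source": {"type": "base64", "media_type": "image/png",
                                     "data": "iVBORw0KGgo="}},
    ]},
    {"role": "assistant", "content": "The build fails at the link step."},
]

shrunk_history = shrink_messages(history, fake_describe)
print(shrunk_history[0]["content"][1]["type"])  # text
```

The function builds new message dicts instead of mutating the input, so the original history stays available if a description turns out to be inadequate.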

Before: ~17,000 tokens of opaque base64

After: ~500 tokens of searchable, referenceable, structured text

Six months from now, when someone asks "what was the exact PIN number on that property tax case?", the answer is right there in the DATA tier. With the raw image, the model would have to re-interpret pixels. With Shrink, it's just reading text.

Real Numbers from a Production Fleet

We built Shrink and ran it against a production 10-agent OpenClaw fleet the same day:

| Agent | Images | Duplicates | Tokens Saved | Cost |
|---|---|---|---|---|
| Yancy (dev agent) | 121 | 28 | 2,193,034 | $0.049 |
| Wayne (trading) | 36 | 18 | 819,614 | $0.014 |
| Abby (food service) | 17 | 1 | 248,696 | $0.012 |
| Henry (mission control) | 5 | 3 | 74,931 | $0.001 |
| Mike (legal) | 1 | 0 | 33,459 | $0.001 |
| Total | 181 | 50 | 3,369,734 | $0.08 |

$0.08 to free nearly 3.4 million tokens. Dedup detection caught 50 duplicate images (28% of the total) and reused their descriptions, so those cost zero additional API calls.
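
One simple way to get this kind of dedup is to hash each base64 payload and describe each distinct hash only once. The sketch below assumes exact, byte-identical duplicates; how Shrink actually detects duplicates is not specified here, and `describe` is a hypothetical callback standing in for the vision-model call.

```python
import hashlib
from typing import Callable


def describe_with_dedup(images_b64: list[str],
                        describe: Callable[[str], str]) -> tuple[list[str], int]:
    """Describe each distinct image once; reuse the result for exact duplicates."""
    cache: dict[str, str] = {}
    descriptions = []
    duplicates = 0
    for data in images_b64:
        digest = hashlib.sha256(data.encode("ascii")).hexdigest()
        if digest in cache:
            duplicates += 1                # no new vision call needed
        else:
            cache[digest] = describe(data)  # first time this payload is seen
        descriptions.append(cache[digest])
    return descriptions, duplicates


calls = []


def counting_describe(data: str) -> str:
    """Fake describer that records how many times it was invoked."""
    calls.append(data)
    return f"description #{len(calls)}"


descriptions, duplicates = describe_with_dedup(["AAAA", "BBBB", "AAAA"], counting_describe)
print(descriptions)  # ['description #1', 'description #2', 'description #1']
print(duplicates)    # 1
print(len(calls))    # 2
```

Hashing only catches identical payloads. A re-encoded or resized copy of the same screenshot would need a perceptual hash or similar, which this sketch does not attempt.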

ROI breakeven: 0.11 turns. The tool pays for itself before the first response completes.

The Scale of What's Possible

If every OpenClaw instance ran Shrink once:

| Metric | Before Shrink | After Shrink | Waste Eliminated |
|---|---|---|---|
| Image tokens transmitted (ecosystem) | 573B/day | ~17B/day | 556B tokens/day |
| Data transferred | 2.3 TB/day | 69 GB/day | 2.2 TB/day |
| GPU compute on dead images | ~100% | ~3% | 97% reduction |
| Estimated daily cost (Sonnet pricing) | ~$34,500/day | ~$1,035/day | ~$33,465/day saved |
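
The token rows follow directly from swapping ~17,000 tokens per image for ~500. A short sketch of that arithmetic, using only the article's own estimates as inputs:

```python
resends_per_day = 33_750_000   # image re-sends per day, from the earlier estimate
tokens_before = 17_000         # per raw base64 image
tokens_after = 500             # per structured text description

daily_before = resends_per_day * tokens_before
daily_after = resends_per_day * tokens_after
reduction = 1 - tokens_after / tokens_before

print(daily_after)                 # 16875000000
print(daily_before - daily_after)  # 556875000000
print(f"{reduction:.1%}")          # 97.1%
```

The reduction depends only on the ratio of description size to image size, so it holds at any fleet size as long as descriptions stay near 500 tokens.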

And that's just OpenClaw. The broader agent ecosystem — LangChain, CrewAI, AutoGen, custom frameworks — multiplies these numbers by 10–50×.

What Needs to Happen

Short term (now)

Use Shrink. It's open source, takes 30 seconds to install, and works today.

clawhub install shrink

Medium term

Agent frameworks should build image lifecycle management into their context engines. Images should have a TTL — after N turns without reference, automatically extract and replace.
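
A TTL policy of this kind could look something like the sketch below. Everything in it is hypothetical: the `TrackedImage` record, the turn counter, and the way a framework would decide that an image was "referenced" are all left as assumptions, and the actual extraction step is only marked, not performed.

```python
from dataclasses import dataclass


@dataclass
class TrackedImage:
    image_id: str
    last_referenced_turn: int
    deflated: bool = False  # True once the raw image has been replaced by text


def expire_images(images: list[TrackedImage], current_turn: int, ttl_turns: int) -> list[str]:
    """Mark images unreferenced for more than `ttl_turns` turns; return their ids."""
    expired = []
    for image in images:
        age = current_turn - image.last_referenced_turn
        if not image.deflated and age > ttl_turns:
            image.deflated = True  # a real engine would extract and replace here
            expired.append(image.image_id)
    return expired


tracked = [
    TrackedImage("screenshot-1", last_referenced_turn=2),
    TrackedImage("chart-7", last_referenced_turn=38),
]

expired_ids = expire_images(tracked, current_turn=40, ttl_turns=20)
print(expired_ids)  # ['screenshot-1']
```

The hard part in practice is updating `last_referenced_turn` reliably, since a model can rely on an image without naming it explicitly.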

Long term

API providers should offer server-side image deflation. If Anthropic's API knows an image hasn't been referenced in 20 turns, it could automatically serve a cached description instead of re-processing the raw pixels. The inference savings alone would justify the engineering investment.

The current state — where every image persists at full fidelity forever — is a design artifact from when multimodal models were new and images were rare. In 2026, with agents receiving dozens of screenshots per session, it's unsustainable.

🦐 Try Shrink — Free & Open Source

Three-Tier Extraction™ · Dedup detection · Fleet scanning · JSON output · 12 CLI flags · Dry-run with cost estimates

clawhub install shrink

Built and shipped in one day. Open source forever.

Frequently Asked Questions

Does Shrink delete my images?

No. It replaces the base64 data in the session JSONL with a structured text description. A .bak backup of the original file is created automatically before any changes. The original image files on disk are untouched.
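
The backup-then-rewrite pattern described here can be sketched as follows. This is an illustration, not Shrink's code: the record layout in the demo is invented, the `transform` callback is hypothetical, and the only detail taken from the answer above is that a `.bak` copy is written before the session JSONL is changed.

```python
import json
import shutil
import tempfile
from pathlib import Path
from typing import Callable


def rewrite_session_with_backup(session_path: Path,
                                transform: Callable[[dict], dict]) -> Path:
    """Copy the session file to <name>.bak, then rewrite each JSONL record."""
    backup_path = session_path.with_name(session_path.name + ".bak")
    shutil.copy2(session_path, backup_path)  # preserve the original first
    lines = session_path.read_text(encoding="utf-8").splitlines()
    rewritten = [json.dumps(transform(json.loads(line))) for line in lines if line.strip()]
    session_path.write_text("\n".join(rewritten) + "\n", encoding="utf-8")
    return backup_path


def replace_image(record: dict) -> dict:
    """Toy transform: swap an image record for a text placeholder."""
    if record.get("type") == "image":
        return {"type": "text", "text": "[image description]"}
    return record


with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "session.jsonl"
    path.write_text('{"type": "image", "data": "iVBORw0KGgo="}\n', encoding="utf-8")

    backup = rewrite_session_with_backup(path, replace_image)

    backup_kept_original = "iVBORw0KGgo=" in backup.read_text(encoding="utf-8")
    rewritten_record = json.loads(path.read_text(encoding="utf-8"))

print(backup.name)              # session.jsonl.bak
print(backup_kept_original)     # True
print(rewritten_record["type"])  # text
```

Restoring is then a matter of copying the `.bak` file back over the session file.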

Can the agent still answer questions about shrunk images?

Yes — that's the whole point of Three-Tier Extraction. The CONTEXT tier captures why the image was sent, the DATA tier captures every readable value, and the VISUAL tier captures design details. The agent can answer questions about specific values, dates, IDs, colors, and layout — often better than re-interpreting raw pixels.

How much does it cost to run?

We shrunk 181 images across 10 agents for $0.08 total using Claude Haiku. Per image, it's approximately $0.0005–$0.001 depending on the model.

Does it work with models other than Claude?

Currently it uses the Anthropic vision API (Haiku or Sonnet). Multi-provider support (OpenAI, Gemini, local models) is on the roadmap.

What about the 2.3 TB number — is that real?

It's an estimate based on 250,000 GitHub stars, 5–10% active install rate, average image counts from our production fleet, and average daily turn counts. The actual number could be higher or lower, but the order of magnitude is sound. Even at half our estimate, that's still over a terabyte of redundant data per day.

Isn't this something Anthropic/OpenAI should fix on the server side?

Ideally, yes. Server-side image caching or automatic deflation after N turns would solve this at the infrastructure level. Until that happens, Shrink is the client-side solution that works today.